Shawn Wallace explains how to get started using AI for facial recognition in the conclusion of our CampIO blog series.

Part of our CampIO 2020 series.

Artificial intelligence and machine learning are important technologies, and we are starting to see them everywhere. Almost every device we use — from our car to our phone to wearables — has features enabled by AI or ML.

I want to be a part of this technology revolution, but I’m not a data scientist or a neural network expert. How can I get started with AI or ML? How can I learn?

You Start with an Idea

The good news: there is a path, and it starts with an idea. I had the idea to build a system that uses facial recognition to identify people who enter a room and then plays entrance music specific to each of them. With this idea in hand, I started thinking about what the solution would look like.

I would need a few things:

- A device with a camera that I could access programmatically to detect faces when people entered the room.

- A component that could match the faces in those images to known people and their entrance music.

- An application or device to play the music if a match is found.

The Device

I started by selecting an AWS DeepLens. The AWS DeepLens is a powerful learning tool that gives developers a computing platform with a built-in high-definition camera that is easy to integrate with other AWS services. For beginners, there is a large learning community that has created machine learning models and starter application code you can use to kick-start your project. Experienced ML and AI technologists can create their own models and algorithms that take advantage of the AWS DeepLens’ substantial capabilities.

The Starting Point

The approach I took was to use an existing AWS DeepLens starter project that did something similar or related to what I needed and modify it to my purpose. My goal was to implement my idea and learn about the design behind ML and AI projects without having to start from scratch in an area that was new to me.

My starting point was a project that used facial and object detection to determine who in an office was drinking the most coffee and then update a Coffee Leaderboard. The system detected faces, and if the person in the image was holding a coffee cup, it updated the leaderboard. Using this as the base for my idea gave me a head start. I would have to manage some code, but the starter project had great examples for me to follow.

The Design

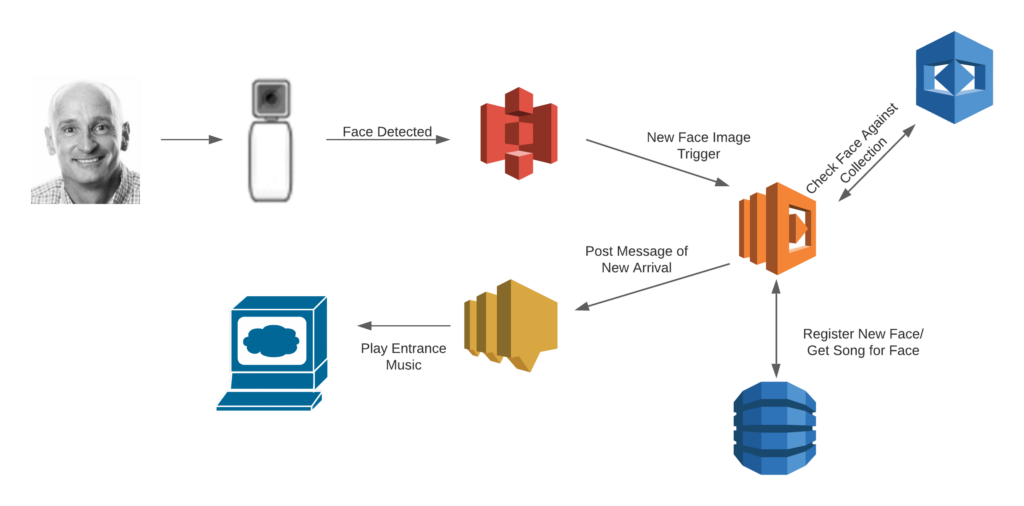

To some, cloud-native designs might look like Rube Goldberg machines due to the number of components and interactions in play. As a solution architect, I have to remind myself of the beauty of these systems in how they accept infrastructure complexity in exchange for application simplicity. These solutions do not disappoint. The composite, cloud-native design uses several AWS services together to implement much of the solution, and it takes relatively little code to bring them together.

Here are the AWS Services I used:

- Simple Storage Service (S3) – used to store images captured by the AWS DeepLens

- Lambda – serverless code container that executes the application code

- Rekognition – performs the facial recognition function

- DynamoDB – stores information about identified faces

- Simple Notification Service (SNS) – notifies the music-playing application that it should play a song

How It Works

The entire process starts when the AWS DeepLens detects a face in its camera. The DeepLens takes a picture, draws a border around the face, and saves the edited image to an Amazon S3 bucket.
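As a rough illustration of the upload step only, here is a minimal sketch in Python. It assumes the frame has already been captured, annotated, and encoded as JPEG bytes on the device. The `faces/` prefix, the timestamp-based key format, and the function names are hypothetical and are not taken from the original project. The `s3` argument is expected to be a boto3 S3 client, for example `boto3.client("s3")`.

```python
from datetime import datetime, timezone


def build_image_key(captured_at, prefix="faces"):
    """Build a unique object key from the capture time (hypothetical naming scheme)."""
    return f"{prefix}/{captured_at.strftime('%Y%m%dT%H%M%S%fZ')}.jpg"


def upload_face_image(s3, bucket, jpeg_bytes, captured_at=None):
    """Store an annotated JPEG frame in S3 and return the key that was used.

    `s3` is assumed to be a boto3 S3 client; `bucket` is a bucket name you own.
    """
    captured_at = captured_at or datetime.now(timezone.utc)
    key = build_image_key(captured_at)
    s3.put_object(
        Bucket=bucket,
        Key=key,
        Body=jpeg_bytes,
        ContentType="image/jpeg",
    )
    return key
```

Using the capture time in the key keeps each frame as a separate object, so every upload can trigger its own downstream processing.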

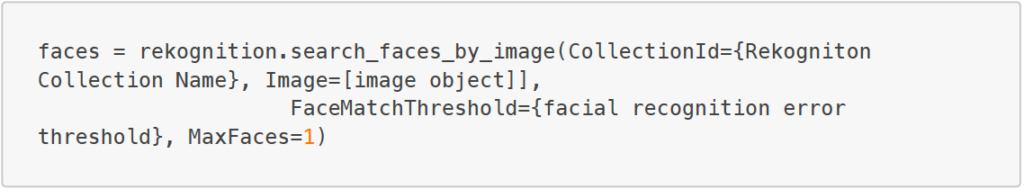

When the DeepLens saves the image in the S3 bucket, it triggers an AWS Lambda function. The function runs a small Python application that retrieves the image from S3 and asks Amazon Rekognition to identify the face in it. Rekognition returns an identifier, which the function uses to determine whether the system already knows that face.
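To make the trigger concrete, the sketch below shows how a Lambda handler might read the bucket and object key out of an S3 notification event. The event layout (`Records`, `s3.bucket.name`, `s3.object.key`) follows the standard S3 notification format, and keys arrive URL-encoded, so they are decoded first. The function names and the print statement are illustrative only, not the project's actual code.

```python
from urllib.parse import unquote_plus


def extract_s3_objects(event):
    """Return a list of (bucket, key) pairs from an S3 notification event."""
    objects = []
    for record in event.get("Records", []):
        s3_info = record["s3"]
        bucket = s3_info["bucket"]["name"]
        # Object keys are URL-encoded in the event (spaces arrive as '+').
        key = unquote_plus(s3_info["object"]["key"])
        objects.append((bucket, key))
    return objects


def lambda_handler(event, context):
    """Entry point that Lambda calls when a new image lands in the bucket."""
    objects = extract_s3_objects(event)
    for bucket, key in objects:
        # The recognition, lookup, and notification steps would be called here.
        print(f"New image: s3://{bucket}/{key}")
    return {"processed": len(objects)}
```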

Rekognition is a powerful image and video analysis tool. It is a proven service built on reliable models and algorithms that “require no machine learning expertise to use.” Using it is simple: a request can be as short as a single API call.
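The post does not say which Rekognition operation the project used. One plausible approach, sketched here as an assumption, is to search a face collection with `search_faces_by_image` and fall back to `index_faces` when nothing matches, so that every visitor ends up with a face ID. The collection name `visitors`, the 90% threshold, and the function name are hypothetical. The `rekognition` argument is expected to be a boto3 client such as `boto3.client("rekognition")`.

```python
def identify_face(rekognition, bucket, key, collection_id="visitors", threshold=90.0):
    """Return a Rekognition face ID for the face in an S3 image, or None.

    Assumes a face collection named `collection_id` already exists.
    """
    image = {"S3Object": {"Bucket": bucket, "Name": key}}

    # Look for an already-indexed face that resembles the one in the image.
    response = rekognition.search_faces_by_image(
        CollectionId=collection_id,
        Image=image,
        MaxFaces=1,
        FaceMatchThreshold=threshold,
    )
    matches = response.get("FaceMatches", [])
    if matches:
        return matches[0]["Face"]["FaceId"]

    # No match: add the face to the collection so it is recognized next time.
    indexed = rekognition.index_faces(
        CollectionId=collection_id,
        Image=image,
        MaxFaces=1,
    )
    records = indexed.get("FaceRecords", [])
    return records[0]["Face"]["FaceId"] if records else None
```

Note that a real client raises an error from `search_faces_by_image` when the image contains no detectable face, so production code would need to handle that case.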

The Lambda function then queries DynamoDB to see whether that identifier already exists. If it does, the person in the image has visited before, and the function returns their specific entrance song name. If the face is not yet in DynamoDB, the function registers it and returns the default entrance song name.
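The lookup-or-register logic could look something like the following sketch. It assumes a DynamoDB table keyed on a `face_id` attribute with a `song` attribute alongside it. Both attribute names, the default song value, and the function name are hypothetical. The `table` argument is expected to be a boto3 Table resource, for example `boto3.resource("dynamodb").Table("visitors")`.

```python
DEFAULT_SONG = "default-entrance-theme"  # hypothetical placeholder value


def lookup_entrance_song(table, face_id, default_song=DEFAULT_SONG):
    """Return the entrance song for a face ID, registering unknown faces.

    Known visitors get their stored song. New visitors are written to the
    table with the default song, which is also what this call returns.
    """
    response = table.get_item(Key={"face_id": face_id})
    item = response.get("Item")
    if item is not None:
        # Returning visitor: use their song, or the default if none is set.
        return item.get("song", default_song)

    # First visit: register the face so a custom song can be assigned later.
    table.put_item(Item={"face_id": face_id, "song": default_song})
    return default_song
```

Registering new faces with the default song means someone can later edit that item to assign a personal song without changing any code.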

The Lambda function then publishes an SNS message containing the pertinent information, which any application that subscribes to the topic can consume. In this instance, a web application listens for new messages from the SNS topic. When a message identifies a visitor, the web application plays the song associated with that person.
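A minimal sketch of the notification step might look like this. The message fields (`face_id`, `song`), the subject line, and the function names are assumptions made for illustration, since the original message format is not described. The `sns` argument is expected to be a boto3 client such as `boto3.client("sns")`, and `topic_arn` is the ARN of a topic you have created.

```python
import json


def publish_visitor_event(sns, topic_arn, face_id, song):
    """Publish a JSON message telling subscribers which song to play."""
    message = {"face_id": face_id, "song": song}
    response = sns.publish(
        TopicArn=topic_arn,
        Subject="Visitor identified",
        Message=json.dumps(message),
    )
    return response["MessageId"]


def song_from_message(message_text):
    """Subscriber side: pull the song name out of the JSON message body."""
    return json.loads(message_text)["song"]
```

Keeping the payload as plain JSON means any subscriber, not just the music player, can react to the same event.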

The Future

There are many applications for facial recognition technology. For example, the OneEye Project has been adapted for customer identification and for an Amber Alert use case.

There are also applications in retail, healthcare, and other industries. I am particularly interested in applications that make use of Rekognition’s ability to find and transcribe text in images and videos and to identify objects.

Finally

If you are interested in applications in machine learning and artificial intelligence, there is a path for you to get started. There is a body of work you can use as a starting point and reasonably priced hardware you can use to interact with the world. Once you get started, you will begin to see how these systems are composed, and you will feel increasingly comfortable taking on more difficult and challenging things. Then you can branch out and maybe create something that will change the world!