Choosing the wrong multi-agent framework is a leading cause of agentic AI project failure, and the decision is almost always made before a single agent is built. This blog post gives IT leaders a practical rubric for selecting the right framework for legacy modernization.

In brief:

- GitHub Copilot and Claude Code excel at AI-assisted coding, but legacy modernization requires an orchestration layer above individual coding assistants that coordinates agents, manages shared context, and controls costs across complex, multiphase workflows.

- Five failure patterns account for most agentic AI project cancellations: do-everything agents, brittle autonomy, information chaos, inflexible architectures, and runaway costs. Each one is preventable with the right framework decision.

- Off-the-shelf frameworks like CrewAI, LangGraph, and AutoGen help you develop prototypes quickly but were designed as general-purpose engines, not for the specific demands of legacy modernization.

- Hybrid or domain-specific frameworks are purpose-built orchestration layers with modular, swappable agents that best mitigate the five failure patterns and adapt to your business processes rather than forcing you to adapt to theirs.

- Multi-agent modernization isn’t right for every situation: small codebases, heavy regulatory constraints, governance immaturity, or severely undocumented systems may require foundational work before agents can deliver value.

Multi-agent frameworks promise to transform how you modernize legacy applications. But Gartner predicts that over 40 percent of agentic artificial intelligence (AI) projects will be canceled before they deliver value, casualties of escalating costs, unclear business value, and inadequate risk controls. Often, the underlying issue is a framework that doesn’t fit the problem it was chosen to solve.

In our experience leading enterprise modernization engagements, most agentic AI project failures trace back to architectural decisions made before a single agent was built. Five specific failure patterns, which we’ll use as evaluation criteria throughout this blog post, account for most of these cancellations. And every one of them is preventable with the right multi-agent framework decision.

The Multi-Agent Framework Selection Problem Nobody’s Talking About

Before we talk about multi-agent frameworks, let’s address what most information technology (IT) leaders reach for first: GitHub Copilot and Claude Code. These are the tools your teams already know, and for good reason.

GitHub Copilot offers deep integration with integrated development environments (IDEs) and fast inline suggestions across 20-plus languages. Claude Code brings exceptional reasoning on complex codebases, a million-token context window, and the ability to read, write, and refactor entire file structures autonomously.

Both are evolving rapidly.

As of early 2026, GitHub offers Claude and OpenAI’s Codex as coding agents directly within the Copilot platform, and Claude Code’s experimental Agent Teams feature lets multiple Claude instances work in parallel on different parts of a codebase.

So why not just use these tools to modernize your legacy systems?

There’s a fundamental difference between AI-assisted coding and AI-orchestrated modernization. GitHub Copilot and Claude Code excel at the coding layer: refactoring files, generating tests, explaining legacy logic, and translating between languages. But a legacy modernization project requires more than independent coding tasks. Legacy modernization involves a coordinated workflow that spans code discovery, requirements extraction, architecture redesign, development, testing, and validation. Each of those phases produces artifacts and context that the next phase depends on.

That coordination layer — deciding which agent works on what, managing shared context across phases, adapting behavior as the codebase reveals surprises, and controlling costs across dozens of parallel operations — is what a multi-agent framework provides. It’s the orchestration above the individual coding assistant.
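To make that coordination layer concrete, here is a minimal Python sketch, assuming a strictly sequential pipeline. The `Phase` and `Orchestrator` classes and the `stub` agents are hypothetical stand-ins, not any framework’s API; real agents would call a model or coding assistant. Each phase declares the upstream artifacts it depends on, and the orchestrator hands it only those, blocking the phase if a dependency is missing.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical agent signature: receives the upstream artifacts it declared
# and returns one new artifact. Real agents would call an LLM or coding tool.
AgentFn = Callable[[dict[str, str]], str]


@dataclass
class Phase:
    name: str          # name of the artifact this phase produces
    needs: list[str]   # upstream artifacts this phase depends on
    agent: AgentFn


@dataclass
class Orchestrator:
    phases: list[Phase]
    artifacts: dict[str, str] = field(default_factory=dict)  # shared context

    def run(self, seed: dict[str, str]) -> dict[str, str]:
        self.artifacts.update(seed)
        for phase in self.phases:
            missing = [n for n in phase.needs if n not in self.artifacts]
            if missing:
                raise RuntimeError(f"{phase.name} blocked; missing {missing}")
            # Hand each agent only the artifacts it declared, not everything.
            inputs = {n: self.artifacts[n] for n in phase.needs}
            self.artifacts[phase.name] = phase.agent(inputs)
        return self.artifacts


def stub(label: str) -> AgentFn:
    """Hypothetical placeholder agent that records which inputs it received."""
    return lambda inputs: f"{label}({', '.join(sorted(inputs))})"


pipeline = Orchestrator([
    Phase("discovery", ["legacy_code"], stub("discover")),
    Phase("requirements", ["discovery"], stub("extract")),
    Phase("design", ["requirements", "discovery"], stub("redesign")),
    Phase("code", ["design"], stub("develop")),
    Phase("tests", ["requirements", "code"], stub("test")),
    Phase("validation", ["requirements", "tests"], stub("validate")),
])
result = pipeline.run({"legacy_code": "COBOL source"})
```

A production orchestrator would add parallelism, retries, and human review gates; the point here is only that phase ordering and artifact handoff live in the orchestration layer, not in any single coding assistant.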

With the coding assistant question settled, the real evaluation begins with the framework landscape.

5 Failure Patterns of Multi-Agent Frameworks

There are plenty of multi-agent framework options to choose from. The challenge is knowing which one fits your modernization scenario. To choose well, you need to understand what goes wrong. Across dozens of agentic AI engagements, we’ve identified five architectural failure patterns:

- Do-everything agents overload a single agent with too many responsibilities, creating a brittle system that can’t scale.

- Brittle autonomy occurs when agents can’t adapt their behavior without code changes as workflow conditions shift.

- Information chaos emerges from poor context management, such as agents drowning in stale or irrelevant data.

- Inflexible architectures force your business processes to conform to the framework rather than the reverse.

- Runaway costs compound silently as token usage, compute, and integration expenses outpace projected return on investment (ROI).

These failure patterns give you a rubric for what to look for in the right multi-agent framework.

Build, Buy, or Hybrid: The Multi-Agent Framework Decision That Shapes It All

The question is structural: Do you adopt an off-the-shelf multi-agent framework, build a custom one from scratch, or take a hybrid approach that customizes on top of a purpose-built orchestration layer?

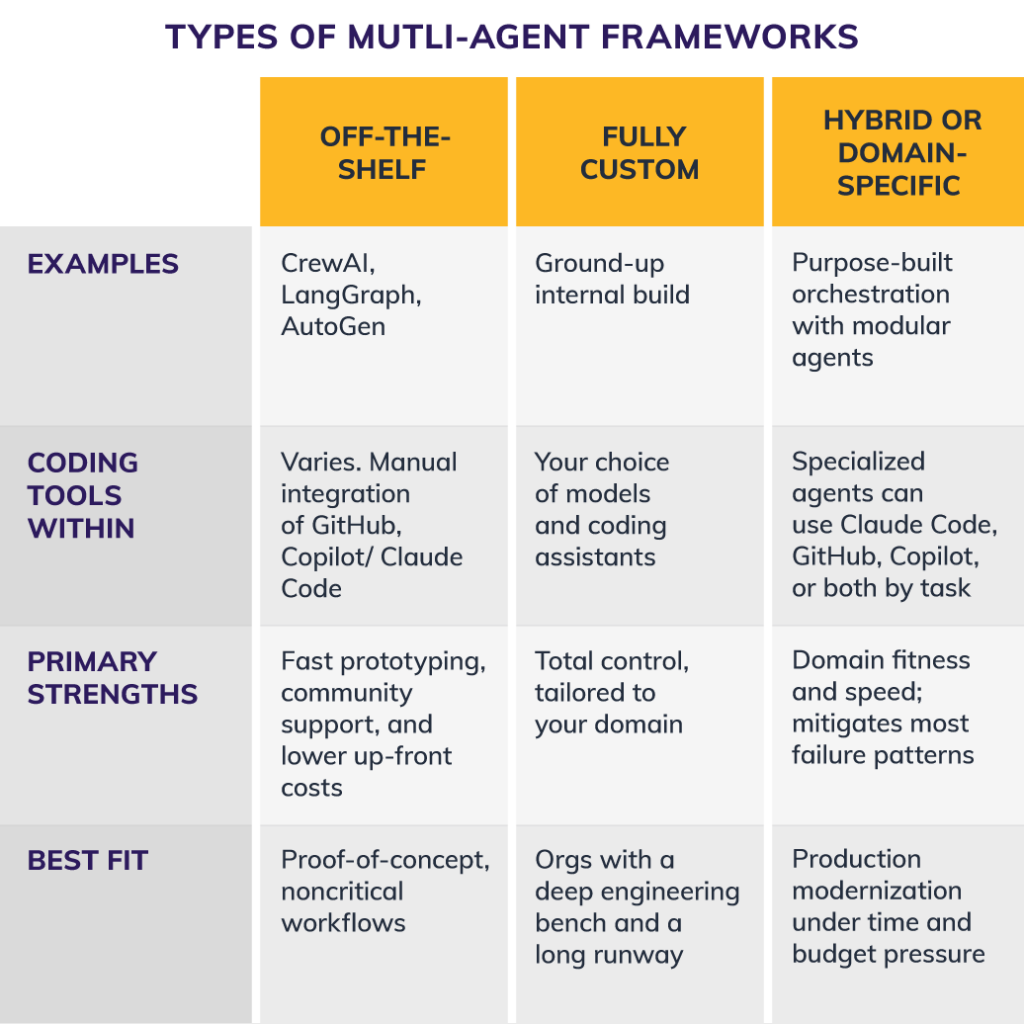

Comparing types of multi-agent frameworks: off-the-shelf, fully custom, and hybrid or domain-specific.

Off-the-Shelf

Off-the-shelf multi-agent frameworks such as CrewAI, LangGraph, and AutoGen get you building fast. But these tools were designed as general-purpose orchestration engines rather than for the specific demands of legacy modernization. Notably, while you can wire a coding assistant into these frameworks, the integration work is manual, and the framework itself doesn’t know how to use those coding tools across a modernization workflow.

Fully Custom

Fully custom multi-agent frameworks offer maximum control, but they carry the heaviest risk of runaway costs. Building multi-agent orchestration, context management, cost controls, and governance from scratch is a significant undertaking. In our experience, most organizations underestimate the timeline by a factor of two or more. Unless you have a deep engineering bench and a long runway, a ground-up build creates the very technical debt you’re trying to eliminate.

Hybrid or Domain-Specific

Hybrid approaches are purpose-built orchestration layers with modular, swappable agents. When the orchestration layer is designed for your domain, they mitigate more of the five failure patterns than either alternative. These architectures can assign different AI models and coding tools to different agents based on the task. The key differentiator is whether the framework adapts to your business processes rather than forcing you to adapt to it.
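One way to get swappable agents is to have the orchestrator depend on an interface rather than on concrete agents. The sketch below assumes Python’s `typing.Protocol` for that interface; the agent classes, their roles, and the model labels in their output are hypothetical placeholders for wrappers around whatever models or coding tools you assign to each task.

```python
from typing import Protocol


class CodingAgent(Protocol):
    """Any agent the orchestrator can call; implementations are interchangeable."""
    name: str

    def run(self, task: str) -> str: ...


class LargeModelRefactorer:
    # Hypothetical: would wrap a high-reasoning model for cross-file refactoring.
    name = "refactorer"

    def run(self, task: str) -> str:
        return f"[large-model] refactor plan for {task}"


class FastModelTestWriter:
    # Hypothetical: would wrap a cheaper, faster model for test generation.
    name = "test_writer"

    def run(self, task: str) -> str:
        return f"[fast-model] tests for {task}"


def dispatch(agents: dict[str, CodingAgent], role: str, task: str) -> str:
    # The orchestrator depends only on the Protocol, so swapping the class
    # registered for a role requires no change here.
    return agents[role].run(task)


agents: dict[str, CodingAgent] = {
    "refactor": LargeModelRefactorer(),
    "tests": FastModelTestWriter(),
}
print(dispatch(agents, "tests", "PAYROLL.cbl"))
```

Replacing `FastModelTestWriter` with any other class that has the same `run` method changes which model writes tests without touching `dispatch`.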

Now that you have an idea of which type of multi-agent framework fits your organization, here’s how to narrow your choice further.

Testing a Multi-Agent Framework for Legacy Modernization: 5 Questions to Ask

When evaluating any multi-agent framework for legacy modernization, apply these five tests:

1. Does It Support True Multi-Agent Orchestration?

If the framework routes everything through a single agent, you’re building a do-everything agent that will collapse under complexity. Individual coding assistants are powerful, but modernization requires distributing discrete responsibilities across specialized agents with clear boundaries.
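A sketch of the “clear boundaries” idea, assuming tasks carry a type label: a router that assigns each task type to exactly one specialist and refuses anything no specialist owns, rather than falling back to a generalist. The task kinds and agent names here are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    kind: str      # hypothetical task types, e.g. "explain_logic", "write_tests"
    payload: str


class Router:
    """Routes each task to exactly one specialist; there is no catch-all agent."""

    def __init__(self) -> None:
        self._owners: dict[str, str] = {}  # task kind -> agent name

    def register(self, kind: str, agent_name: str) -> None:
        # One owner per task kind keeps responsibilities from overlapping.
        if kind in self._owners:
            raise ValueError(f"{kind!r} is already owned by {self._owners[kind]}")
        self._owners[kind] = agent_name

    def route(self, task: Task) -> str:
        # Deliberately no default: an unowned task is a design gap to surface,
        # not something to hand to a do-everything agent.
        if task.kind not in self._owners:
            raise LookupError(f"no specialist owns task kind {task.kind!r}")
        return self._owners[task.kind]


router = Router()
router.register("explain_logic", "discovery_agent")
router.register("write_tests", "test_agent")
print(router.route(Task("write_tests", "PAYROLL.cbl")))  # test_agent
# router.route(Task("rewrite_everything", "PAYROLL.cbl")) would raise LookupError
```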

2. Can Agent Instructions Adapt by Workflow State Without Code Changes?

Legacy codebases surface surprises constantly, like undocumented business logic, shifting code patterns, and emerging dependencies. Your framework needs agents that adjust their approach as context evolves, not agents that break when conditions deviate from the original prompt.
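One way to meet this test is to keep agent instructions in data keyed by workflow state, so changing behavior means editing configuration rather than code. In this sketch the JSON table, the state names, and `instruction_for` are all hypothetical; a real framework might load the table from a file or database.

```python
import json

# Hypothetical instruction table. Stored as data, it can be edited without
# changing or redeploying the code that reads it.
INSTRUCTIONS_JSON = """
{
  "requirements_agent": {
    "default": "Extract business rules from the discovered modules.",
    "undocumented_logic": "Flag each inferred rule for human review and cite the source lines."
  },
  "test_agent": {
    "default": "Generate characterization tests for each public entry point.",
    "new_dependency": "Add contract tests for the newly discovered dependency first."
  }
}
"""


def instruction_for(table: dict, agent: str, state: str) -> str:
    """Pick the state-specific instruction, falling back to the agent's default."""
    per_agent = table[agent]
    return per_agent.get(state, per_agent["default"])


table = json.loads(INSTRUCTIONS_JSON)
print(instruction_for(table, "requirements_agent", "undocumented_logic"))
print(instruction_for(table, "test_agent", "undocumented_logic"))  # falls back to default
```

When discovery reports undocumented logic, the orchestrator switches the workflow state, and the requirements agent picks up the stricter instruction without a redeploy.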

3. Does It Manage Context Intelligently?

Agents need the right information at the right time, not a firehose of every prior interaction. This is where individual coding tools hit their limits: Claude Code’s million-token context window is formidable, but when 22 agents are working in parallel across a complex codebase, as in the engagement described below, what each agent sees needs to be scoped, sequenced, and curated by the framework.
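A minimal sketch of scoped context, assuming each artifact carries a topic tag and a precomputed token count: the framework gives an agent only in-scope artifacts, newest first, until a per-agent token budget is spent. The `Artifact` fields, topics, and budget are illustrative assumptions; a real framework might rank by relevance rather than recency.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Artifact:
    topic: str    # hypothetical tags, e.g. "billing", "auth"
    created: int  # monotonically increasing step number
    tokens: int   # size estimate, however your framework measures it
    text: str


def scoped_context(artifacts: list[Artifact], scope: set[str], budget: int) -> list[Artifact]:
    """Return in-scope artifacts, newest first, without exceeding the token budget."""
    selected: list[Artifact] = []
    used = 0
    for art in sorted(artifacts, key=lambda a: a.created, reverse=True):
        if art.topic not in scope:
            continue  # irrelevant to this agent, so it is never sent
        if used + art.tokens > budget:
            continue  # too large for the remaining budget; a smaller item may still fit
        selected.append(art)
        used += art.tokens
    return selected


history = [
    Artifact("billing", 1, 400, "original rate table notes"),
    Artifact("auth", 2, 300, "login flow map"),
    Artifact("billing", 3, 500, "revised rate rules"),
    Artifact("billing", 4, 200, "rounding edge case"),
]
ctx = scoped_context(history, {"billing"}, budget=800)
print([a.text for a in ctx])
```

Here the billing agent never sees the auth artifact, and the oldest billing note is dropped once the two newer ones use most of the budget.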

4. Does It Adapt to Your Business Processes or Force You to Adapt to It?

This separates genuinely flexible architectures from those that impose their own workflow assumptions. For legacy modernization, where every codebase has unique characteristics, framework rigidity is a project killer.

5. Does It Provide Cost Visibility and Token-Level Controls?

A single GitHub Copilot session is predictable. Dozens of agents making parallel application programming interface (API) calls across multiple models are not, unless the framework provides granular visibility into what each agent is consuming.
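A sketch of per-agent cost tracking with hard caps, assuming you can read token counts from each model response. The model names and per-1,000-token prices are made-up placeholders, not any provider’s actual rates, and `CostLedger` and `BudgetExceeded` are hypothetical names.

```python
from collections import defaultdict

# Hypothetical prices in USD per 1,000 tokens; substitute your providers' real rates.
PRICE_PER_1K = {"model-large": 0.015, "model-small": 0.001}


class BudgetExceeded(Exception):
    """Raised instead of making a call that would push an agent past its cap."""


class CostLedger:
    """Tracks token use and spend per agent and enforces per-agent caps."""

    def __init__(self, caps_usd: dict[str, float]) -> None:
        self.caps = caps_usd
        self.spent: dict[str, float] = defaultdict(float)
        self.tokens: dict[str, int] = defaultdict(int)

    def cost_of(self, model: str, tokens: int) -> float:
        return tokens / 1000 * PRICE_PER_1K[model]

    def would_exceed(self, agent: str, model: str, tokens: int) -> bool:
        return self.spent.get(agent, 0.0) + self.cost_of(model, tokens) > self.caps[agent]

    def charge(self, agent: str, model: str, tokens: int) -> float:
        # Check before recording, so an over-budget call is blocked, not billed.
        if self.would_exceed(agent, model, tokens):
            raise BudgetExceeded(f"{agent} would exceed its ${self.caps[agent]:.2f} cap")
        cost = self.cost_of(model, tokens)
        self.spent[agent] += cost
        self.tokens[agent] += tokens
        return cost

    def report(self) -> dict[str, tuple[int, float]]:
        """Per-agent (tokens, USD) totals."""
        return {a: (self.tokens[a], round(self.spent[a], 4)) for a in self.spent}


ledger = CostLedger({"discovery_agent": 1.00, "test_agent": 0.10})
ledger.charge("discovery_agent", "model-large", 40_000)  # about $0.60
ledger.charge("test_agent", "model-small", 50_000)       # about $0.05
print(ledger.report())
```

Checking the cap before recording a call is the design choice that matters: an agent that would overspend is stopped, and `report()` shows which agent consumed what.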

Choosing the right multi-agent framework matters, but how much does it actually change the outcome? Let’s look at the numbers.

The ROI Math: Agent-Augmented vs. Traditional Modernization

In a recent legacy modernization engagement, a single senior architect working with 22 specialized AI agents delivered 100 percent feature parity and 86 percent automated test coverage in two weeks. The traditional estimate for the same scope: 16 weeks with a five-person team. That’s an 87.5 percent compression in time-to-production (two weeks instead of 16), and the resource math is just as stark.

The traditional approach required five full-time engineers with deep knowledge of the legacy codebase. The agent-augmented approach required one architect who understood the business domain and could direct specialized agents through code discovery, requirements extraction, redesign, development, and testing.
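Working the stated figures through shows where the time and resource comparisons come from. This uses only the numbers above (two weeks versus 16, one person versus five) and assumes everyone on both teams works full time.

```python
from fractions import Fraction

# Figures from the engagement described above; assumes everyone is full time.
traditional_weeks, traditional_people = 16, 5
augmented_weeks, augmented_people = 2, 1

# Share of the traditional schedule that was eliminated.
time_compression = 1 - Fraction(augmented_weeks, traditional_weeks)

# Total effort in person-weeks for each approach.
traditional_effort = traditional_weeks * traditional_people
augmented_effort = augmented_weeks * augmented_people
effort_ratio = Fraction(traditional_effort, augmented_effort)

print(f"Time compression: {float(time_compression):.1%}")  # prints "Time compression: 87.5%"
print(f"Effort: {traditional_effort} vs {augmented_effort} person-weeks ({effort_ratio}x)")
```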

These results align with broader patterns across engagements: 30–50 percent cost savings, 30–80 percent faster timelines, and 10–20 times the productivity per resource.

But I want to be precise about what these numbers represent. They are ranges, not guarantees. They depend on framework fitness, codebase complexity, and organizational readiness, which is exactly why the framework decision matters so much.

When NOT to Use AI Agents for Legacy Modernization

The mark of genuine expertise is knowing what not to build. Here are the conditions where I’d steer a client away from a multi-agent modernization approach, even if they’re already sold on the technology.

1. Small, Simple Codebases

If you’re modernizing a 10,000-line application with clear documentation and active developers who understand it, the overhead of standing up a multi-agent framework isn’t justified.

2. Heavy Regulatory Constraints Requiring Deterministic Transformation

When every line of code needs to be traced to a specific requirement with full audit provenance, agents introduce a validation burden that can exceed the productivity gains. This applies particularly in safety-critical systems where the cost of an undetected error is catastrophic.

3. Governance Immaturity

If your organization doesn’t have the governance structure to validate AI-generated outputs systematically, you’re not ready for agent-augmented development at scale. Deploying agents without the governance to match creates risk that’s even harder to unwind than the technical debt you’re trying to eliminate.

4. Systems So Poorly Documented That Even Agents Can’t Reverse-Engineer Requirements

AI agents are remarkably capable at code discovery and pattern recognition, but they’re not magic. If the legacy system has zero documentation, no test suites, departed developers, and convoluted architecture, agents will struggle just as much as humans would, but faster and with more confidence. That’s a dangerous combination.

In each of these cases, the most valuable move is better preparation. Agents amplify what’s already there, which means the quality of your foundation determines the quality of your results.

Make the Best Framework Decision for the Right Outcome

The sweet spot for agent-augmented modernization is in large, complex codebases where the original developers have departed, business logic is embedded but undocumented, technical debt has accumulated over years, and there’s pressure to modernize fast.

The multi-agent framework you choose today determines whether your modernization project joins the more than 40 percent that Gartner predicts will be canceled or the cohort that delivers production results in weeks, not quarters.

Donavan Stanley, senior architect of AI agents and LLMs at Centric Consulting, said, “Speed without verification is a liability. Sometimes the right answer is to invest in governance and documentation first, then revisit modernization with agents once the foundation supports it.”

Our AI agent development experts know how to identify the right multi-agent framework for your modernization challenge and how to build for production, not just proof of concept.