Your legacy modernization strategy probably hasn’t changed much, but the economics behind it have. AI agents are making options that once felt out of reach worth reconsidering, starting with the applications you’ve been putting off for years. In this blog post, we break down where AI agents deliver real value in legacy modernization, where they don’t, and what that means for your portfolio.

In brief:

- The 7 Rs framework remains the right foundation for legacy system modernization, but AI agents are changing the cost and timeline assumptions behind which Rs are actually viable.

- AI agents deliver the highest impact in rebuild/replace, refactor, and rearchitect scenarios because they compress discovery and transformation work that has historically made these options cost-prohibitive.

- Agent-augmented modernization can reduce rewrite costs by 30–50 percent and compress timelines by 50–80 percent, making applications that have been languishing in the retain phase realistic candidates for modernization.

- Agents are comprehension tools that can analyze codebases, extract undocumented business logic, and map dependencies before any transformation begins.

- The human-in-the-loop imperative holds: Agents handle the analytical and generative work, while you provide business context, validate outputs, and make the strategic calls that matter most.

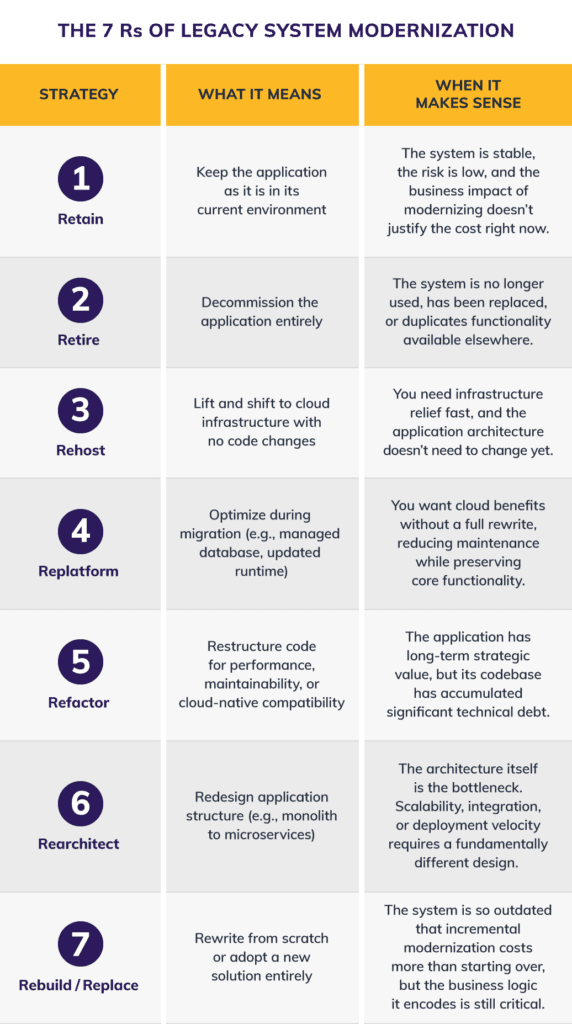

Legacy system modernization isn’t a new problem, but the playbook for solving it is changing quickly. The 7 Rs framework (retain, retire, rehost, replatform, refactor, rearchitect, and rebuild/replace) has guided modernization strategy for years. And it’s still the right starting point.

What’s different now is that artificial intelligence (AI) agents are shifting the economics of which Rs make sense and when, turning rewrites that were once too expensive and time-consuming into the most practical path forward.

This blog post is a practitioner’s assessment of where AI agents deliver real value in legacy system modernization today, where they don’t, and how the cost-benefit math has changed for organizations sitting on significant technical debt.

What Hasn’t Changed About the Legacy System Modernization Problem

The numbers haven’t improved. Industry reports estimate that the burden of maintaining legacy systems hinders up to 88 percent of enterprises. The global application modernization market is projected to grow from roughly $17.80 billion in 2023 to $52.46 billion by 2030, a compound annual growth rate (CAGR) of 16.7 percent. These figures show how much technical debt has accumulated and how urgently organizations need to address it.

But legacy app modernization projects continue to stall for the same reasons they always have:

- The cost is prohibitive

- The risk is unclear

- Institutional knowledge has walked out the door

- Critical business logic is undocumented

- The people who originally built these systems are retiring faster than they can transfer what they know

The result is a growing gap between what the business needs and what the technology can deliver.

The 7 Rs framework isn’t broken. It’s still the most practical way to evaluate a portfolio of legacy applications and match each one to the right modernization path. But what has been static for too long is the set of assumptions behind “which R.”

For at least a decade, those assumptions have been driven by the same variables: team size, timeline, budget, risk tolerance. AI agents introduce a new variable. While they don’t change the underlying strategies, they do change the economics of deciding which strategies are viable for your organization.

The 7 Rs of Legacy System Modernization: A Quick Refresher

Originally shaped by Gartner’s migration research and expanded by AWS and others, the 7 Rs provide a structured decision framework for evaluating each application in your portfolio. Each R represents a different depth of intervention and a different trade-off between cost, risk, and long-term value.

The 7 Rs of Legacy System Modernization: a practical framework to decide when to retain, retire, rehost, refactor, or fully rebuild legacy applications based on business impact and technical need.

These Rs have been the right framework for years. The question is: With AI agents in the mix, how does the cost-benefit analysis for each one shift?

Where AI Agents Fit in Legacy Modernization — and Where They Don’t

Not every R benefits equally from AI agents. Being honest about where agents add real value, and where they don’t, is how you avoid the hype-driven project failures that Gartner predicts will claim over 40 percent of agentic AI initiatives by 2027.

Here’s our assessment based on early successes in leading these engagements.

High Impact: Rebuild/Replace, Refactor, Rearchitect

These are where AI agents change the return on investment (ROI) equation most dramatically because they address the two factors that have historically made these Rs prohibitively expensive: discovery and transformation.

Before you can rewrite, refactor, or rearchitect anything, you need to understand what the existing system actually does.

That means reverse-engineering undocumented business logic, mapping dependencies, and extracting implicit requirements from code written by people who left the organization years ago. This discovery work has traditionally required senior engineers to spend weeks or months reading code line by line. It’s expensive, slow, and often incomplete.

AI agents compress this dramatically. Specialized agents can analyze legacy codebases, extract business rules, map data flows, identify dependencies, and generate in hours the documentation that would take a human team weeks to produce. And critically, AI agents don’t just generate code. The real value lies in their ability to understand what a system does before transforming it.

“Most organizations treat AI agents as code generators, give it the old code, and get new code back. That’s a fraction of the value. Agents are comprehension tools, not just generation tools,” says Donavan Stanley, Centric Consulting senior architect of AI agents and LLMs.

For rebuild/replace, agents can handle code discovery, extraction, architecture design, development, and test generation as a coordinated workflow. Rewrites that were once cost-prohibitive (because discovery alone ate half the budget) become viable when agents compress that phase from months to days.

For refactor, agents reduce the risk that has historically made refactoring terrifying: the fear of breaking something you don’t fully understand. When agents can analyze the existing codebase, generate comprehensive test coverage, and validate that restructured code preserves business behavior, refactoring becomes a disciplined engineering exercise rather than a high-stakes gamble.
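One way to picture the "preserves business behavior" check is a characterization test: record what the legacy routine returns for a set of inputs, then confirm the restructured version returns the same results. The sketch below is illustrative only. The functions `legacy_discount` and `refactored_discount`, the discount rules, and the sample inputs are hypothetical stand-ins, not code from any real engagement.

```python
from itertools import product


def legacy_discount(order_total, customer_years):
    """Hypothetical legacy routine with tangled, undocumented rules."""
    d = 0
    if order_total > 1000:
        d = 10
        if customer_years > 5:
            d = d + 5
    else:
        if customer_years > 5:
            d = 5
    return d


def refactored_discount(order_total, customer_years):
    """Hypothetical restructured version that should behave identically."""
    volume_discount = 10 if order_total > 1000 else 0
    loyalty_discount = 5 if customer_years > 5 else 0
    return volume_discount + loyalty_discount


def find_behavior_differences(old_fn, new_fn, cases):
    """Return every input where the new function disagrees with the old one."""
    return [
        (args, old_fn(*args), new_fn(*args))
        for args in cases
        if old_fn(*args) != new_fn(*args)
    ]


# Probe around the thresholds where the rules change, since that is where
# restructured code most often drifts from the original behavior.
order_totals = [0, 999, 1000, 1001, 5000]
tenures = [0, 5, 6, 20]
cases = list(product(order_totals, tenures))

differences = find_behavior_differences(legacy_discount, refactored_discount, cases)
print(f"{len(cases)} cases checked, {len(differences)} differences")
```

An empty `differences` list is evidence, not proof, that behavior is preserved. The value of agent-generated test coverage is in widening the set of cases, while a human still decides whether any difference found is a regression or an old bug worth fixing.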

For rearchitect, agents can decompose monolithic systems by identifying service boundaries, mapping data ownership, and generating the scaffolding for a microservices architecture — work that traditionally requires experienced architects spending weeks in front of whiteboards.
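To make "identifying service boundaries" and "mapping data ownership" a little more concrete, here is a minimal sketch of one simple heuristic: group modules that touch the same tables, on the assumption that modules sharing data are hard to separate. The module names, table names, and the grouping rule itself are hypothetical. Real decomposition weighs many more signals, and this is not a description of how any particular agent framework works.

```python
from collections import defaultdict


def candidate_service_groups(module_tables):
    """Group modules that touch at least one table in common.

    Each group is a starting point for a service boundary discussion,
    not a final answer.
    """
    # Invert the mapping: table -> modules that touch it.
    table_modules = defaultdict(set)
    for module, tables in module_tables.items():
        for table in tables:
            table_modules[table].add(module)

    groups = []
    unvisited = set(module_tables)
    while unvisited:
        start = min(unvisited)  # deterministic starting point
        group, frontier = {start}, [start]
        while frontier:
            module = frontier.pop()
            for table in module_tables[module]:
                for neighbor in table_modules[table]:
                    if neighbor not in group:
                        group.add(neighbor)
                        frontier.append(neighbor)
        unvisited -= group
        groups.append(sorted(group))
    return groups


# Hypothetical inventory: which tables each module reads or writes.
module_tables = {
    "billing": {"invoices", "payments"},
    "collections": {"payments"},
    "catalog": {"products"},
    "pricing": {"products", "price_rules"},
    "notifications": set(),
}

for group in candidate_service_groups(module_tables):
    print(group)
```

In this toy inventory, `billing` and `collections` land together because both touch `payments`. Whether they should stay together is exactly the kind of call an architect makes with business context the data alone does not carry.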

Moderate Impact: Replatform

Replatforming involves targeted optimizations during migration, such as updating a database engine, moving to managed services, and adjusting configurations for a cloud runtime.

AI agents can assist with dependency analysis, configuration mapping, and automated testing of the replatformed application. The impact is real but narrower than the code-intensive Rs above because the scope of change is smaller by design.

Low Impact: Retain, Retire, Rehost

These Rs are fundamentally business and infrastructure decisions, not code transformation challenges:

- Retain is a strategic choice to accept current technical debt.

- Retire is a business decision to decommission.

- Rehost is an infrastructure move that doesn’t involve changing application code.

AI agents don’t add much value here because the bottleneck isn’t code comprehension or transformation — it’s organizational decision-making and infrastructure operations. Agents aren’t going to decide which applications to retire. That’s a human judgment call, grounded in a nuanced business context that no agent has access to.

The New Math: How Agents Change the ROI Calculation

Here’s the practical shift. If you’re an information technology (IT) leader with a portfolio of legacy applications, you’ve probably already categorized them using the 7 Rs, or something like it. Some were tagged as “retain” not because they’re healthy, but because the cost of rewriting them couldn’t be justified. Some were tagged as “refactor,” but the project was never funded because the risk profile was too high.

Legacy modernization with AI changes that.

When the cost of a rewrite drops 30–50 percent and the timeline compresses by 50–80 percent, the threshold for justifying a rebuild drops too. Applications that have been sitting in the “retain” column for years — accumulating technical debt, consuming maintenance budget, blocking innovation — may now be viable for rebuild or refactor.

Consider the before and after. A legacy application rewrite using traditional approaches typically requires 12–16 weeks with a development team, assuming you can find engineers who understand the legacy codebase.

In a recent engagement, one senior architect working with 22 specialized AI agents delivered 100 percent feature parity and 86 percent automated test coverage in two weeks. The agents handled code discovery, requirements extraction, architecture redesign, development, and testing. The architect provided the business context, validated outputs, and made strategic calls that required human judgment.

That’s a fundamental shift in which Rs are economically viable. The most common mistake I see is leaders evaluating AI-augmented modernization against the cost of a traditional rewrite.

That’s the wrong comparison. The real comparison is the cost of the rewrite versus the cost of doing nothing: the ongoing maintenance spend, the innovation you can’t pursue because the legacy system is consuming your engineering capacity, and the competitive risk of operating on a technology stack that can’t integrate with modern tools.
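The arithmetic behind that comparison can be sketched in a few lines. Everything numeric below except the 30–50 percent and 50–80 percent ranges is a hypothetical placeholder (the rewrite estimate, the maintenance spend, the opportunity-cost figure, and the three-year horizon), so treat it as a template for your own numbers rather than a benchmark.

```python
def agent_augmented_range(traditional_estimate, low_pct, high_pct):
    """Return (best_case, worst_case) after applying a percent reduction range."""
    best_case = traditional_estimate * (100 - high_pct) / 100
    worst_case = traditional_estimate * (100 - low_pct) / 100
    return best_case, worst_case


def cost_of_doing_nothing(annual_maintenance, annual_opportunity_cost, years):
    """Cumulative cost of keeping the legacy system over a planning horizon."""
    return (annual_maintenance + annual_opportunity_cost) * years


# Hypothetical inputs for one application; replace with your own figures.
traditional_rewrite_cost = 1_000_000
traditional_weeks = 16
annual_maintenance = 300_000
annual_opportunity_cost = 200_000  # assumed value of work the team cannot pursue
horizon_years = 3

# Reduction ranges quoted in this post: 30-50 percent cost, 50-80 percent timeline.
cost_best, cost_worst = agent_augmented_range(traditional_rewrite_cost, 30, 50)
weeks_best, weeks_worst = agent_augmented_range(traditional_weeks, 50, 80)
status_quo = cost_of_doing_nothing(
    annual_maintenance, annual_opportunity_cost, horizon_years
)

print(f"Agent-augmented rewrite cost: {cost_best:,.0f} to {cost_worst:,.0f}")
print(f"Agent-augmented timeline: {weeks_best:.1f} to {weeks_worst:.1f} weeks")
print(f"Cost of doing nothing over {horizon_years} years: {status_quo:,.0f}")
```

The point of the sketch is the shape of the comparison: the rewrite range sits next to the status quo figure, not next to the traditional rewrite estimate.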

Here’s how to decide which applications are candidates for agent-augmented modernization:

- Code Complexity and Size: Larger, more complex systems benefit most from agent-assisted discovery

- Documentation Quality: The less documentation exists, the more value agents provide in reverse-engineering requirements

- Available Institutional Knowledge: When the original developers have departed, agents fill a gap that humans can’t

- Business Criticality: High-value systems justify the investment in agent-augmented approaches

- Compliance Requirements: Heavily regulated systems with strict audit needs may require more human-intensive approaches

There are also scenarios that do not call for agent-augmented modernization:

- Small, well-documented applications with active development teams don’t need the overhead of a multi-agent framework.

- Systems requiring deterministic, fully auditable transformation may not be ready for probabilistic AI outputs.

- Organizations without governance maturity to validate agent-generated code should invest in that foundation first. Speed without verification is a liability.
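The criteria and exclusions above can be turned into a rough first-pass screen. The sketch below assumes a 1-to-5 rating for each criterion, equal weights, and simple thresholds. All of these are illustrative choices, as are the application names, and they should be tuned to your own portfolio.

```python
from dataclasses import dataclass


@dataclass
class Application:
    name: str
    complexity: int            # 1 (small, simple) to 5 (large, complex)
    documentation_gap: int     # 1 (well documented) to 5 (undocumented)
    knowledge_loss: int        # 1 (original team active) to 5 (team gone)
    business_criticality: int  # 1 (low value) to 5 (high value)
    compliance_burden: int     # 1 (light) to 5 (strict audit requirements)
    needs_deterministic_transform: bool = False
    governance_ready: bool = True


def screen(app):
    """Return (score, reasons_to_pause) for one application."""
    reasons = []
    if app.complexity <= 2 and app.documentation_gap <= 2 and app.knowledge_loss <= 2:
        reasons.append("small, documented, actively staffed")
    if app.needs_deterministic_transform:
        reasons.append("requires deterministic, auditable transformation")
    if not app.governance_ready:
        reasons.append("governance for validating agent output not in place")
    # Equal weights are an assumption; compliance burden counts against fit.
    score = (
        app.complexity
        + app.documentation_gap
        + app.knowledge_loss
        + app.business_criticality
        - app.compliance_burden
    )
    return score, reasons


# Hypothetical portfolio entries.
portfolio = [
    Application("claims-engine", 5, 5, 4, 5, 2),
    Application("intranet-wiki", 1, 1, 1, 2, 1),
]

for app in portfolio:
    score, reasons = screen(app)
    status = "pause: " + "; ".join(reasons) if reasons else "candidate"
    print(f"{app.name}: score {score}, {status}")
```

A score like this only orders the conversation. The exclusion reasons matter more than the number, because any one of them can outweigh a high score.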

Now that you understand how AI impacts legacy modernization strategies, let’s discuss how to use the 7 Rs to evaluate your portfolio.

What AI Means for Your Legacy Modernization Strategy

AI agents change the economics but not the strategy. The 7 Rs are still the right framework for evaluating your legacy portfolio.

What’s changed is which Rs are affordable and practical for applications that have been languishing in “retain” because the alternatives were too expensive.

If you’re sitting on a portfolio of legacy systems, as most enterprises are, here’s what I recommend as a starting point:

- First, audit your legacy portfolio against the 7 Rs with fresh eyes, specifically revisiting every application tagged as “retain” and asking whether the cost assumptions that drove that decision still hold.

- Second, identify two or three applications where the rewrite ROI has shifted most dramatically — large, complex codebases with departed subject matter experts, undocumented business logic, and mounting technical debt.

- Third, start with a contained pilot. Pick one application — not the most critical one — and test the agent-augmented approach against your traditional estimates. Let the results speak for themselves.

Through all of this, the human-in-the-loop imperative holds:

- AI agents handle the intensive analytical and generative work: code comprehension, requirements extraction, test generation, and transformation.

- Humans provide the business context, validate outputs against intent, and make strategic calls that require judgment, empathy, and organizational knowledge.

It’s human and machine intelligence working together on problems neither could solve alone at this speed or scale.

The legacy modernization problem hasn’t changed. But for the first time in over a decade, the math has.
